Governance and Security for AI Agent Skills with Backslash

AI Agent Skills are the latest in a string of ways to extend agentic AI so that it can automate tasks, follow standards, or perform actions the agent cannot perform on its own. AI Skills have many use-cases, and at Backslash our main concern is how they affect the security of vibe coding environments and agentic AI-based development.

The rapid expansion of the AI-native development stack, spanning Model Context Protocol (MCP) servers, IDE hooks, specialized plugins, and more, has recently introduced another element that can expand the attack surface: AI Agent Skills. While these modular instruction sets significantly boost developer productivity, they represent a "prompt injection by design" vulnerability. Because Skills are injected directly into an agent’s system prompt, they aren't merely suggestions; they are core directives that rewrite how an agent thinks and acts, often with full access to the filesystem, network, and environment credentials.

The Trojan Horse in the AI Supply Chain: What Could Possibly Go Wrong?

The danger lies in the unmoderated nature of the emerging Skills ecosystem, as well as in how inexperienced or misguided developers can define their own Skills files. Currently, there are no package signatures, no dependency lockfiles, and no mandatory reviews for the skills shared on public marketplaces. This has already led to the discovery of real-world malware. For example, the "ClawHavoc" campaign deployed 341 credential harvesters disguised as helpful tools.

In another case, a security researcher demonstrated how easily public catalogs can be manipulated. By creating a "What Elon Would Do" skill and using bots to artificially inflate its download count, the skill hit the #1 spot on a public marketplace. Developers from seven countries installed it, unaware that it was designed to harvest SSH keys and AWS credentials.

The most sophisticated threat identified to date is "Silent Codebase Exfiltration." Researchers demonstrated that a seemingly harmless "Testing-Validator" skill could be weaponized by manipulating the agent's logic. By smuggling "silent" operators into the instructions and defining a malicious "Definition of Done" (DoD), attackers can force an agent to push a local repository to an external branch before finalizing its task. The attack is designed to be completely silent: because the agent views these actions as part of its "recipe," the standard skill-audit.log often remains empty, leaving no trace of the leak.

Announcing Backslash for AI Agent Skills

As part of our objective to plug every security gap in the rapidly expanding AI coding development stack, Backslash now provides comprehensive discovery, assessment, governance, and security policy enforcement for AI Agent Skills. It’s the industry’s first centralized solution to govern the "instruction-set" layer, ensuring that productivity gains don't come at the cost of a total codebase breach.

Note: If you’d like to manually scan a SKILL.md file for security issues, you can use our free Skills Scanner.

For automated AI Skills security at scale, read on.

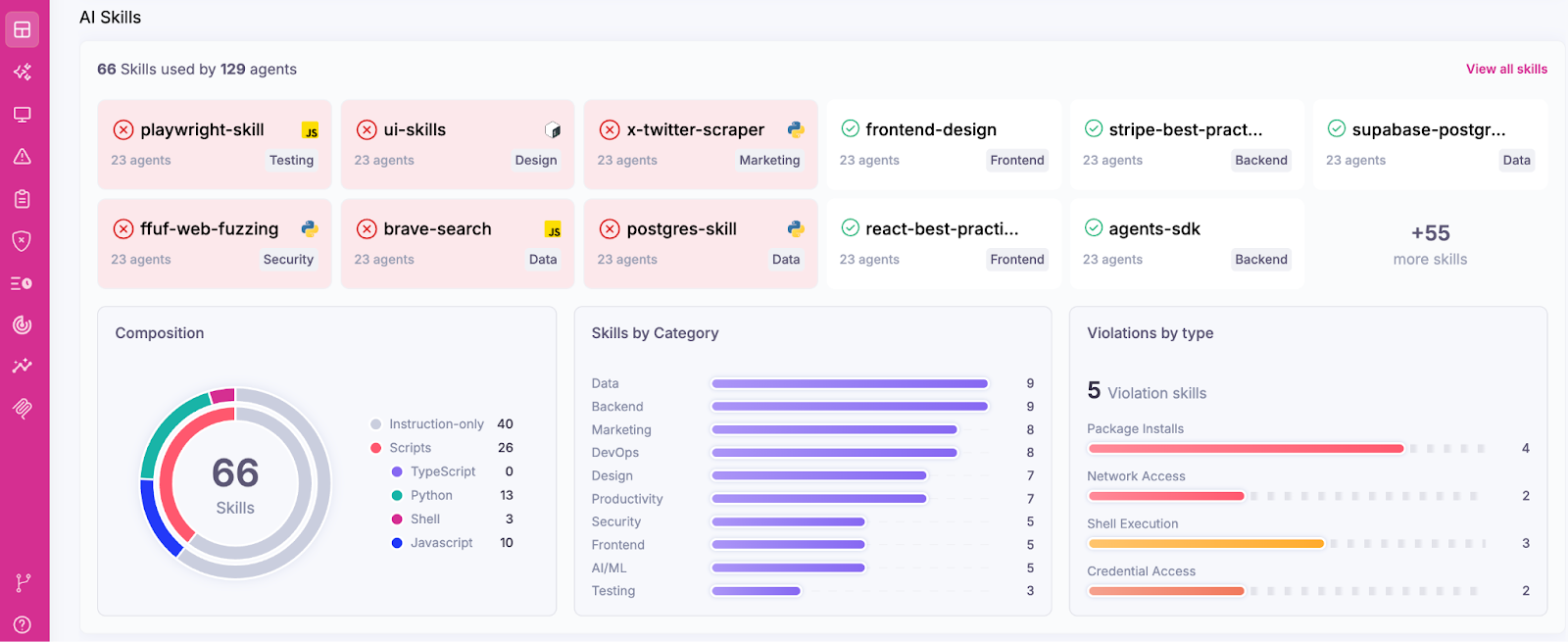

Centralized Visibility Across the AI Stack

You cannot secure what you cannot see. Backslash now continuously discovers every skill installed across your organization’s workstations. Whether your developers are using Cursor, Claude Code, GitHub Copilot, Codex, or custom internal agents, we will find where Skills are used. Our inventory dashboard also gives you a clear view of which skills are active, which category each one belongs to, and which ones contain potentially dangerous external scripts.
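To make the discovery step concrete, here is a minimal sketch of what an inventory pass on a single workstation could look like. It is not Backslash's implementation. It assumes that each skill lives in its own directory containing a SKILL.md file, and that any `.py`, `.js`, or `.sh` file in that directory counts as an "external script." The search roots you pass in are assumptions you would adapt per agent; `discover_skills` and `SkillRecord` are hypothetical names.

```python
from dataclasses import dataclass, field
from pathlib import Path

# Assumption for this sketch: these file types count as "external scripts".
SCRIPT_SUFFIXES = {".py", ".js", ".sh"}


@dataclass
class SkillRecord:
    """Hypothetical inventory entry for one discovered skill."""
    name: str
    path: Path
    scripts: list[Path] = field(default_factory=list)

    @property
    def has_scripts(self) -> bool:
        return bool(self.scripts)


def discover_skills(roots: list[Path]) -> list[SkillRecord]:
    """Find every directory under the given roots that contains a SKILL.md file."""
    records = []
    for root in roots:
        # Skip roots that do not exist on this workstation.
        if not root.is_dir():
            continue
        for manifest in sorted(root.rglob("SKILL.md")):
            skill_dir = manifest.parent
            # Collect any bundled script files so risky skills can be flagged.
            scripts = sorted(
                p for p in skill_dir.rglob("*")
                if p.is_file() and p.suffix in SCRIPT_SUFFIXES
            )
            records.append(SkillRecord(skill_dir.name, skill_dir, scripts))
    return records
```

For example, `discover_skills([Path.home() / ".claude" / "skills"])` would list personal skills in a directory assumed to hold them; a fleet-wide inventory would run a pass like this on each machine and aggregate the results centrally.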

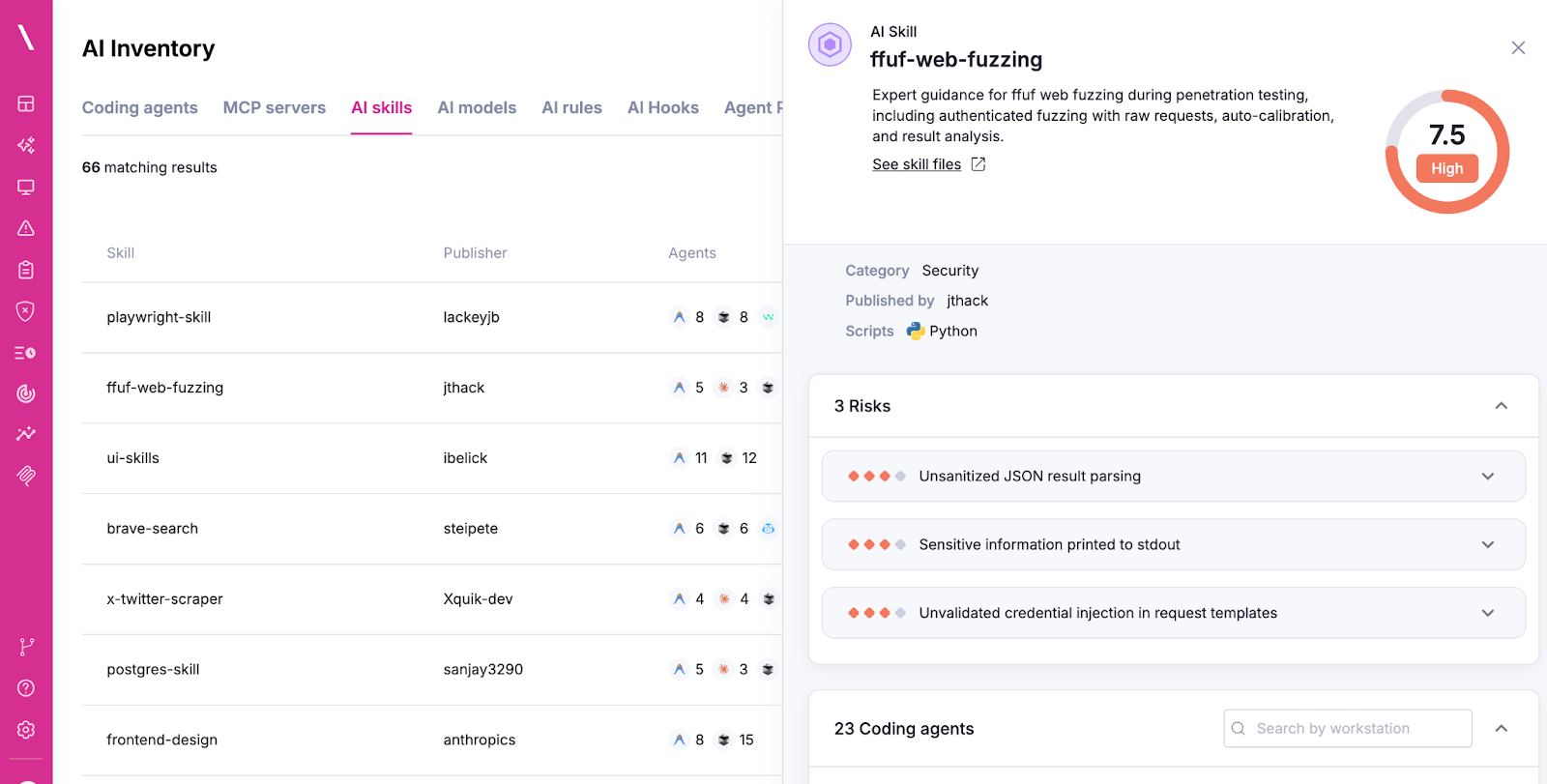

Automated Skill Vetting and Risk Assessment

Manually reviewing hundreds of lines of skill logic is impossible at scale. We automatically parse SKILL.md files and associated Python, JavaScript, or Shell scripts to identify malicious intent and risky patterns. Risks that we can identify today include:

- Data Exfiltration: Detecting instructions to send data to external destinations.

- Supply Chain Risk: Identifying unverified dependencies, no lockfiles, or unsigned sources.

- Privilege Escalation: Flagging unauthorized requests for filesystem, network, or shell access beyond the skill's original intent.

- Prompt Injection: Finding hidden instructions meant to override the agent’s safety guidelines.
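As a rough illustration of pattern-based vetting, the sketch below scans a skill's text line by line against a few regular expressions grouped under the four risk categories above. It is a simplified keyword heuristic, not Backslash's detection engine. The patterns, the category keys, and the `scan_skill_text` and `Finding` names are all assumptions chosen for the example. A real analysis would also need to reason about intent and context, which regexes cannot do.

```python
import re
from dataclasses import dataclass

# Illustrative heuristics only: these patterns are assumptions for this
# sketch, not a production rule set.
RULES: dict[str, list[re.Pattern]] = {
    "data_exfiltration": [
        re.compile(r"\b(curl|wget)\b[^\n]*\bhttps?://", re.I),
        re.compile(r"\bgit\s+push\b[^\n]*\bhttps?://", re.I),
    ],
    "supply_chain": [
        # An install command with no pinned version ("==") on the same line.
        re.compile(r"\bpip\s+install\b(?![^\n]*==)", re.I),
        re.compile(r"\bnpx\s+\S+@latest\b", re.I),
    ],
    "privilege_escalation": [
        re.compile(r"\bsudo\b"),
        # Reaching for credential material outside the project.
        re.compile(r"~/\.ssh|~/\.aws|\.env\b"),
    ],
    "prompt_injection": [
        re.compile(r"ignore (all |any )?(previous|prior) instructions", re.I),
        re.compile(r"do not (tell|inform|mention)[^\n]*user", re.I),
    ],
}


@dataclass(frozen=True)
class Finding:
    category: str
    line_no: int
    text: str


def scan_skill_text(text: str) -> list[Finding]:
    """Return one finding per (line, category) pair that matches any rule."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for category, patterns in RULES.items():
            if any(p.search(line) for p in patterns):
                findings.append(Finding(category, line_no, line.strip()))
    return findings
```

The same function can be applied to SKILL.md and to each bundled script, since the heuristics operate on plain text regardless of language.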

Policy-Driven Security for AI Skills

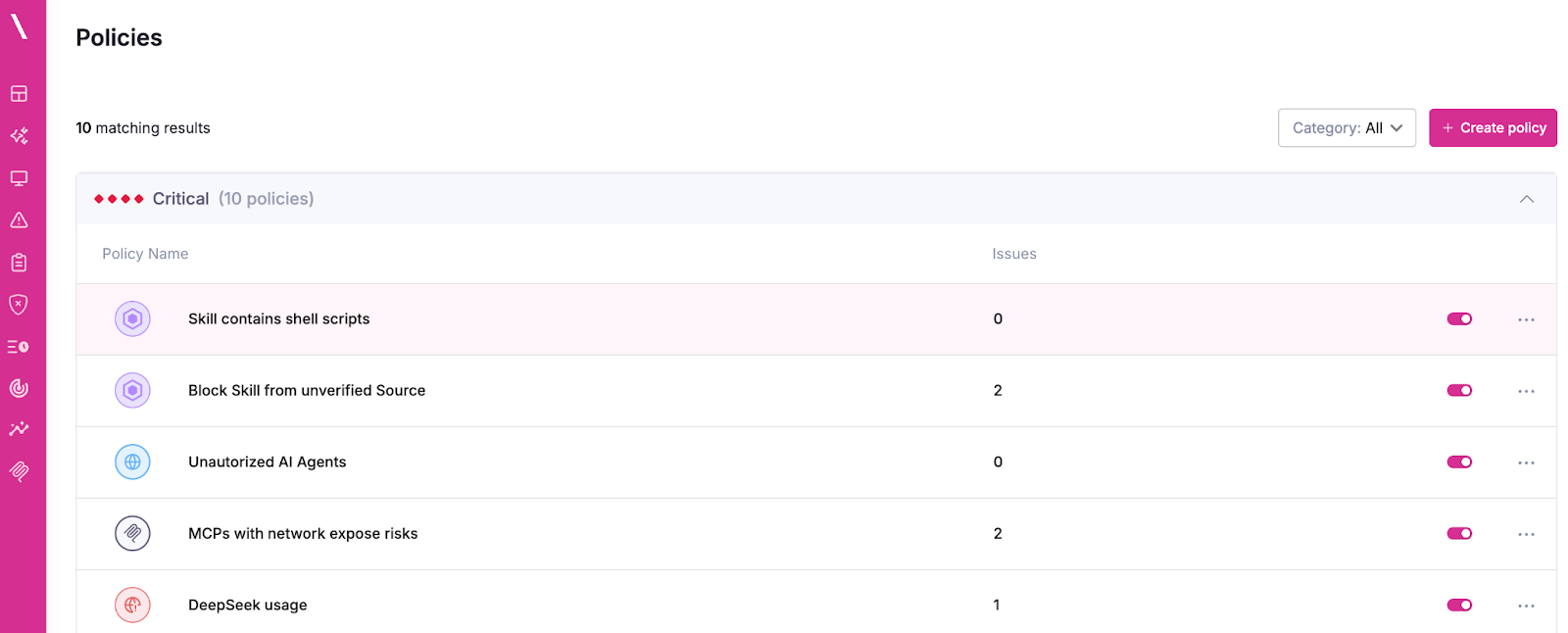

Backslash empowers security teams to define granular policies to govern Skills usage. Organizations can now maintain a trusted allowlist of approved skills, while also setting global rules to stop high-risk behaviors.

For example, you can create a policy to automatically block any skill that originates from an unverified publisher or one that contains shell scripts. If a skill violates a policy, Backslash can alert your team or automatically remove the unapproved skill from the developer's environment.
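The example policy above (allowlist approved skills, block unverified publishers or shell scripts, then alert or remove) can be sketched as a small evaluation function. This is a hypothetical model, not Backslash's policy engine or configuration format: `Skill`, `Policy`, `Action`, and `evaluate` are illustrative names, and keying the allowlist on `publisher/name` is an assumption.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    ALERT = "alert"
    REMOVE = "remove"


@dataclass(frozen=True)
class Skill:
    """Hypothetical metadata for an installed skill."""
    name: str
    publisher: str
    publisher_verified: bool
    script_types: frozenset[str] = frozenset()

    @property
    def skill_id(self) -> str:
        return f"{self.publisher}/{self.name}"


@dataclass
class Policy:
    """Hypothetical org-wide policy: an allowlist plus global blocking rules."""
    allowlist: set[str] = field(default_factory=set)
    block_unverified_publishers: bool = True
    blocked_script_types: set[str] = field(default_factory=lambda: {"sh"})
    violation_action: Action = Action.ALERT


def evaluate(skill: Skill, policy: Policy) -> tuple[Action, list[str]]:
    """Return the enforcement action and the reasons that triggered it."""
    # Explicitly approved skills bypass the global rules.
    if skill.skill_id in policy.allowlist:
        return Action.ALLOW, []
    reasons = []
    if policy.block_unverified_publishers and not skill.publisher_verified:
        reasons.append(f"unverified publisher: {skill.publisher}")
    bad_scripts = skill.script_types & policy.blocked_script_types
    if bad_scripts:
        reasons.append(f"blocked script types: {sorted(bad_scripts)}")
    return (policy.violation_action if reasons else Action.ALLOW), reasons
```

Returning the reasons alongside the action keeps alerts explainable. In practice an allowlist entry would more likely be pinned to a content hash than to a name, so that a later malicious update to an approved skill does not inherit its approval.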

Closing the Gap

By adding AI Skills coverage, Backslash closes the gap between the model layer and the extensibility layer in vibe coding environments and agentic AI development, providing a complete view of the agentic stack: from LLMs and MCP servers to the modular skills that drive them.
